The Mean Time Between Failures (MTBF) is an important reliability metric that can help you estimate how reliable a hard drive model is likely to be. Understanding how MTBF is calculated allows you to make informed decisions about how to manage and maintain your storage systems. This guide walks through the key steps for calculating hard drive MTBF.

What is MTBF?

MTBF stands for Mean Time Between Failures. It is a reliability measurement that indicates the average time a device or component functions before failing. Strictly speaking, MTBF applies to repairable systems that can be restored to an operational state after a failure. For components that are replaced rather than repaired, such as hard disk drives, the equivalent term is Mean Time To Failure (MTTF), but drive manufacturers commonly publish the figure as MTBF.

For hard drives, MTBF is an estimate of how long, on average, a drive will operate before a critical failure occurs that requires replacing the drive. It is expressed in units of hours. A drive with a higher MTBF has a lower failure rate and higher reliability than a drive with a lower MTBF.

How is MTBF calculated?

The MTBF measurement is calculated using statistical analysis and reliability testing data. Manufacturers test samples of newly designed hard drive models under various operating conditions until a significant number fail. They record the time to failure for each drive tested.

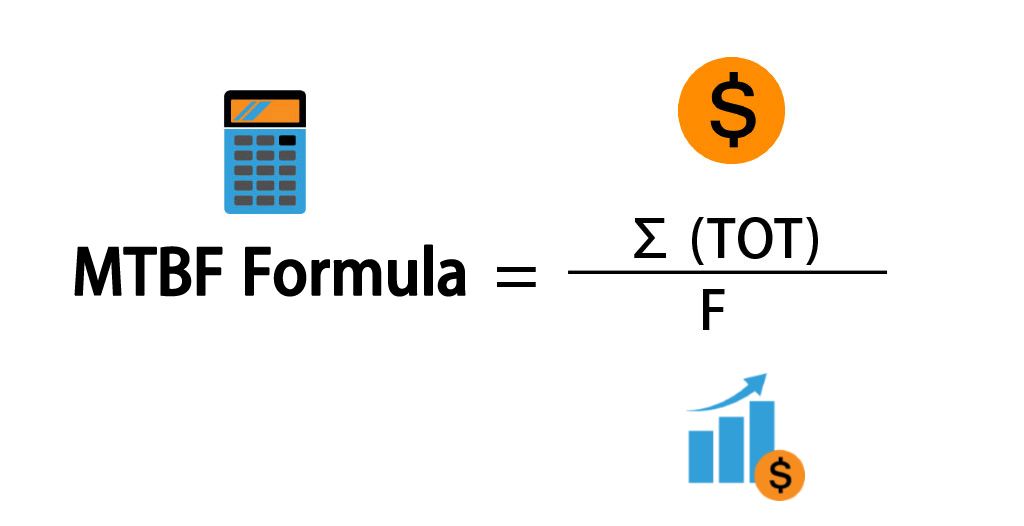

From this reliability testing data, the MTBF can be calculated using the following formula:

MTBF = Total Operating Hours / Number of Failures

Total operating hours is the cumulative running time recorded across all drives in the test, including the drives that never failed. The number of failures is the total number of drives that failed during testing.

For example, if 100 identical hard drives were tested, and they accumulated 350,000 hours of operation before 35 of them failed, the MTBF would be:

MTBF = 350,000 hours / 35 failures = 10,000 hours

This example MTBF of 10,000 hours means that, across the tested population, one failure occurred on average for every 10,000 hours of drive operation.
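The arithmetic above can be sketched in a few lines of Python. The function name `mtbf_hours` and its parameters are illustrative assumptions, not part of any standard tool.

```python
def mtbf_hours(total_operating_hours: float, failures: int) -> float:
    """Return MTBF as cumulative operating hours divided by observed failures."""
    if failures <= 0:
        # With zero failures the ratio is undefined, so no point estimate exists.
        raise ValueError("at least one failure is required to compute MTBF")
    return total_operating_hours / failures


# Worked example from the text: 350,000 cumulative hours and 35 failures.
print(mtbf_hours(350_000, 35))  # 10000.0
```

Note that the number of drives tested (100 in the example) does not appear in the formula directly; it only matters through the hours those drives accumulate.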

MTBF estimates versus real-world results

It’s important to understand that the MTBF is an estimate based on lab testing conditions. The actual lifetime and failure rate of a production hard drive may differ in real-world operating environments. Factors like drive workload, temperature, quality control, and handling can impact the actual reliability and lifespan.

Published MTBF specs are useful for comparing the predicted durability of different hard drive models. However, the actual MTBF calculated from drives operating in the field is considered a more accurate reliability metric.

Using MTBF to predict hard drive failure rates

While MTBF provides an indication of the average failure interval, it can also be used to calculate the annualized failure rate (AFR) for a drive model. AFR measures the percentage of drives that are expected to fail in a year.

AFR is calculated from MTBF as follows:

AFR = (8,760 hours per year / MTBF) × 100%

For example, if a drive has an MTBF of 100,000 hours and runs continuously, its AFR is:

AFR = (8,760 / 100,000) × 100% = 8.76%

This means that out of a population of those drives, about 8.76% would be expected to fail per year. As the MTBF goes up, the AFR goes down.
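A minimal Python sketch of this conversion follows. It assumes drives run 24 hours a day (8,760 power-on hours per year), and the function names are illustrative. The second function is an addition to the text's formula: under an assumed constant failure rate (an exponential model), the fraction failing within a year is 1 - exp(-8760 / MTBF), which is close to the simple ratio whenever the MTBF is much longer than one year.

```python
import math

HOURS_PER_YEAR = 8760  # assumes 24x7 operation


def afr_simple(mtbf_hours: float) -> float:
    """Ratio used in the text: AFR = 8760 / MTBF, returned as a fraction."""
    return HOURS_PER_YEAR / mtbf_hours


def afr_exponential(mtbf_hours: float) -> float:
    """AFR under an assumed constant-failure-rate (exponential) model."""
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


for mtbf in (100_000, 1_000_000):
    print(
        f"MTBF {mtbf:>9,} h: "
        f"simple {afr_simple(mtbf):.2%}, "
        f"exponential {afr_exponential(mtbf):.2%}"
    )
```

The two results drift apart as the MTBF approaches one year, which is one reason the simple ratio is best treated as an approximation.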

MTBF factors for hard drives

Many design, manufacturing, and usage factors contribute to determining the MTBF for a hard drive model. Here are some of the key factors:

Drive capacity

Higher capacity hard drives tend to have a lower MTBF rating. As capacity increases, the recording density also increases. With bits packed more tightly, there is less tolerance for issues like thermal expansion that can interfere with read/write heads.

Rotational speed

Faster-spinning hard drives tend to have lower MTBFs. Higher rotational speed places more physical stress and friction on components like the spindle motor and platters.

Component quality

The quality and durability of all hardware components – like the motor bearings, arm actuator, and onboard controllers – directly impact reliability and MTBF.

Firmware

Refined and debugged firmware that controls all drive operations leads to more stable performance and fewer interruptions that contribute to wear.

Workload

Heavier workloads with more drive activity tend to wear components faster. Drives marketed for enterprise applications are designed for these heavier workloads and typically carry higher MTBF specs than desktop drives.

Environmental stress

Exposure to factors like high temperatures, moisture, and shock or vibration can negatively impact MTBF. Enterprise drives are tested under more strenuous environmental conditions.

Using MTBF in hard drive deployments

Understanding MTBF specs can help guide hard drive selection and deployment decisions:

- Compare MTBF values of different drives when choosing models to purchase.

- Estimate annual failure rates to determine spare drive requirements.

- Calculate the drive replacement interval needed to maintain availability.

- Determine whether replacement schedules should be based on elapsed time, power-on hours, or other usage metrics.

- Evaluate operational practices to minimize environmental stress and workload on drives.

With critical systems like storage servers, using drives with higher MTBF ratings can maximize uptime. For less crucial applications, drives with lower MTBF may still provide sufficient availability at a lower cost.
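As a rough illustration of the spare-drive estimate mentioned in the list above, the sketch below multiplies fleet size by the AFR derived from MTBF. The function names, the fleet figures, and the `safety_factor` margin are all hypothetical, and continuous operation (8,760 hours per year) is assumed.

```python
import math


def expected_annual_failures(
    fleet_size: int, mtbf_hours: float, hours_per_year: float = 8760
) -> float:
    """Expected failures per year for a fleet, using AFR = hours_per_year / MTBF."""
    return fleet_size * hours_per_year / mtbf_hours


def spares_to_stock(
    fleet_size: int, mtbf_hours: float, safety_factor: float = 1.5
) -> int:
    """Scale expected failures by a hypothetical safety margin and round up."""
    expected = expected_annual_failures(fleet_size, mtbf_hours)
    return math.ceil(expected * safety_factor)


# Hypothetical fleet: 500 drives rated at 1,000,000 hours MTBF.
print(expected_annual_failures(500, 1_000_000))
print(spares_to_stock(500, 1_000_000))
```

The safety factor is a planning choice rather than something MTBF provides; field failure rates can exceed the rated figure, so some margin above the expected count is usually sensible.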

MTBF standards and testing limitations

MTBF testing is not governed by a single universal standard. Manufacturers follow various established protocols that specify criteria like test sample sizes, operating conditions, workloads, failure criteria, and documentation.

Common reliability prediction standards include:

- Telcordia SR-332 – Developed by Telcordia (formerly Bellcore) and commonly applied to telecom hardware.

- MIL-HDBK-217 – Pioneering military handbook for electronics reliability prediction.

- IEC TR 62380 – International reliability prediction model for electronic components.

While these standards help add consistency across MTBF figures, there are still limitations to consider:

- Sample sizes are generally small compared to full production volumes.

- Accelerated stress testing only approximates years of operation within a much shorter test period.

- Qualification testing determines design viability, not lifespan limits.

- Ideal lab environments differ from real-world conditions.

As a statistical estimate, MTBF ratings still provide valuable insight into the engineering quality and intrinsic reliability of a drive model. But actual results may vary based on application factors.

Using actual field data to refine MTBF

The initial MTBF rating for a drive model provides a starting point. As a hard drive design matures and is deployed into service, field data becomes available to refine the MTBF estimate.

By tabulating the accumulated drive hours and the number of failed drives from systems in service, MTBF can be recalculated to reflect real-world experience rather than laboratory conditions. The resulting metric is the field MTBF:

Field MTBF = Total field hours / Total field failures

This field calculation is considered a more accurate, empirical MTBF number. The sample covers the installed population of drives rather than just test samples, and the operating conditions reflect how systems and data centers work in practice.

Field MTBF, and the AFR derived from it, may differ from the original manufacturer estimates. These figures provide feedback that can be applied to future drive designs and MTBF methodology.
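The recalculation from field data can be sketched as follows. The `DriveRecord` structure and the fleet values are invented for illustration; a real inventory system would supply power-on hours and failure status in its own format.

```python
from dataclasses import dataclass


@dataclass
class DriveRecord:
    power_on_hours: float  # hours this drive has been in service
    failed: bool           # True if the drive failed while being observed


def mtbf_from_field_data(records: list[DriveRecord]) -> float:
    """Total hours across all drives divided by the number of failed drives."""
    total_hours = sum(r.power_on_hours for r in records)
    failures = sum(1 for r in records if r.failed)
    if failures == 0:
        raise ValueError("no failures observed; MTBF cannot be estimated")
    return total_hours / failures


# Made-up fleet of four drives with one failure.
fleet = [
    DriveRecord(20_000, False),
    DriveRecord(15_000, True),
    DriveRecord(25_000, False),
    DriveRecord(20_000, False),
]
mtbf = mtbf_from_field_data(fleet)
print(f"MTBF from field data: {mtbf:,.0f} hours")
print(f"Implied annual failure rate: {8760 / mtbf:.2%}")
```

Hours from drives that are still running count toward the total, just as in the lab formula; leaving them out would understate the MTBF.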

Caveats when using MTBF

While understanding MTBF is useful for assessing hard drive reliability, be aware of these important limitations when applying it:

- MTBF does not indicate actual lifespan – Drives may fail before or after the MTBF interval.

- It is not a guarantee of performance – Specific drives may have quality issues.

- Actual MTBF depends on operating conditions – Lab results differ from field deployments.

- Failure rate is not constant – It typically increases over time as drives wear out.

- Not all failures are equal – A single MTBF figure does not distinguish catastrophic failures from recoverable errors.

MTBF should be used as a general comparative metric between drive models to select suitable candidates for a given application based on predicted reliability and availability needs.
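To illustrate why MTBF is not a lifespan, here is a small sketch under an assumed constant failure rate (an exponential model). Real drives do not follow this model exactly, as the caveat about rising failure rates notes, and the function name and the 1,000,000-hour rating are hypothetical. Under this assumption the chance that a drive is still working after t hours is exp(-t / MTBF).

```python
import math


def survival_probability(hours: float, mtbf_hours: float) -> float:
    """Probability a drive still works after `hours`, assuming a constant failure rate."""
    return math.exp(-hours / mtbf_hours)


mtbf = 1_000_000  # hypothetical rating, in hours
for years in (1, 5, 10):
    p = survival_probability(years * 8760, mtbf)
    print(f"{years:>2} years: {p:.1%} expected to survive")

# At t = MTBF the survival probability is exp(-1), roughly 37%.
print(f"at MTBF: {survival_probability(mtbf, mtbf):.1%}")
```

Even in this idealized model, most drives would fail before reaching the MTBF figure, which is why the metric is better read as a population failure rate than as an expected service life.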

Using hard drive SMART data

While MTBF provides a baseline failure estimate, the actual health status of an individual hard drive can be monitored using S.M.A.R.T. (Self-Monitoring, Analysis and Reporting Technology).

SMART leverages onboard drive sensors and diagnostics to detect signs of impending issues. Status values and error logs can indicate factors like:

- High operating temperatures

- Excessive shock and vibration

- Bad sectors or read instability

- Degraded lubrication and friction

- Problems with the actuator or motor

If SMART monitoring shows a specific drive is experiencing above-normal stress, failure may occur sooner than the MTBF estimate suggests. Combining MTBF population statistics with SMART health monitoring therefore gives both a fleet-level prediction and a per-drive health indication.
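A per-drive check of this kind might look like the sketch below. It assumes the raw SMART values have already been read by some monitoring tool and passed in as a dictionary keyed by attribute ID. The IDs shown (5, 194, 197) are commonly used on ATA drives, but attribute meanings and raw-value encodings are vendor-specific, and the thresholds here are hypothetical.

```python
# Hypothetical warning limits for illustration only; real limits vary by vendor and model.
WATCHED_ATTRIBUTES = {
    5: ("Reallocated sector count", 0),
    194: ("Temperature (Celsius)", 50),
    197: ("Current pending sector count", 0),
}


def smart_warnings(raw_values: dict[int, int]) -> list[str]:
    """Return one warning per watched attribute whose raw value exceeds its limit."""
    warnings = []
    for attr_id, (label, limit) in WATCHED_ATTRIBUTES.items():
        value = raw_values.get(attr_id)
        if value is not None and value > limit:
            warnings.append(f"{label}: {value} (limit {limit})")
    return warnings


# Made-up readings for one drive, keyed by SMART attribute ID.
sample = {5: 12, 194: 41, 197: 0}
for line in smart_warnings(sample):
    print(line)
```

A drive that trips checks like these is a candidate for early replacement regardless of what the model's MTBF rating predicts for the fleet as a whole.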

Conclusion

MTBF provides a useful prediction of hard drive reliability across a population of drives, but it does not give the lifespan of any individual drive, and real-world results can differ from rated figures. SMART telemetry coupled with field failure data helps refine MTBF accuracy. With proper drive selection, monitoring, and replacement, MTBF metrics can inform data center storage management practices to balance availability, performance, and total cost of ownership (TCO).