RAID 6 is a type of RAID (Redundant Array of Independent Disks) that uses block-level striping with double distributed parity (Wikipedia). This means that data is distributed across multiple disks, with parity information stored across disks as well to allow for fault tolerance. Specifically, RAID 6 can withstand the failure of up to two disks without losing data.

The main benefits of RAID 6 are increased fault tolerance and the ability to recover from up to two disk failures. By distributing data and parity information across multiple disks, RAID 6 can provide continued access to data even if two disks fail (PCMag). The tradeoff is slower write performance than RAID levels with less parity, because every write must also update two parity blocks.

How RAID 6 Provides Fault Tolerance

RAID 6 provides fault tolerance by combining striping with two independent sets of parity. Parity allows the system to reconstruct data in the event of a disk failure by calculating the missing data from the remaining data and parity information.

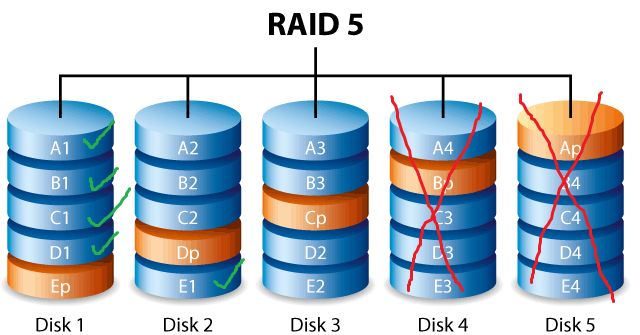

In RAID 6, the parity information is distributed across all disks in the array, as it is in RAID 5 (it is RAID 4 that stores all parity on a single dedicated disk). The difference from RAID 5 is that each stripe carries two parity blocks instead of one. The two blocks are calculated using different mathematical formulas and placed on different disks. This is known as double distributed parity.

By keeping two independent parity blocks per stripe, RAID 6 can withstand the simultaneous failure of up to two disks without data loss. If one disk fails, the remaining data and either parity block can be used to reconstruct the missing data. If two disks fail, both parity blocks are used together to solve for the two missing blocks. This provides excellent fault tolerance and uptime.[1]
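The two-parity reconstruction described above can be sketched in a few lines. This is a minimal illustration, not any particular controller's implementation: it assumes the commonly used P+Q scheme, where P is the XOR of the data blocks and Q is a weighted sum in the finite field GF(2^8), with the field polynomial 0x11D and generator 2 taken as assumptions. The function names (`gf_mul`, `compute_pq`, `recover_two`) are hypothetical.

```python
# Sketch of RAID 6 style P+Q parity over GF(2^8). Illustrative only.

def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8), reducing by the polynomial 0x11D (assumed)."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D
        b >>= 1
    return result

def gf_pow(a: int, n: int) -> int:
    """Raise a to the n-th power in GF(2^8) by repeated multiplication."""
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result

def gf_inv(a: int) -> int:
    """Multiplicative inverse of a nonzero byte: a^254, since a^255 == 1."""
    return gf_pow(a, 254)

def compute_pq(data_blocks):
    """Return (P, Q): P = XOR of D_i, Q = XOR of (2^i * D_i) in GF(2^8)."""
    length = len(data_blocks[0])
    p = bytearray(length)
    q = bytearray(length)
    for i, block in enumerate(data_blocks):
        coeff = gf_pow(2, i)
        for j, byte in enumerate(block):
            p[j] ^= byte
            q[j] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)

def recover_two(data_blocks, x, y, p, q):
    """Rebuild lost data blocks x and y from the surviving blocks plus P and Q."""
    length = len(p)
    # Treat the lost blocks as zeros and compute parity over the survivors.
    survivors = [bytes(length) if i in (x, y) else b
                 for i, b in enumerate(data_blocks)]
    p_part, q_part = compute_pq(survivors)
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    denom_inv = gf_inv(gx ^ gy)
    dx = bytearray(length)
    dy = bytearray(length)
    for j in range(length):
        a = p[j] ^ p_part[j]  # equals Dx ^ Dy
        b = q[j] ^ q_part[j]  # equals gx*Dx ^ gy*Dy
        # Two equations, two unknowns: Dx = (b ^ gy*a) / (gx ^ gy)
        dx[j] = gf_mul(b ^ gf_mul(gy, a), denom_inv)
        dy[j] = a ^ dx[j]
    return bytes(dx), bytes(dy)

if __name__ == "__main__":
    data = [b"RAID", b"six!", b"demo", b"data"]
    p, q = compute_pq(data)
    damaged = [data[0], None, data[2], None]  # blocks 1 and 3 are lost
    print(recover_two(damaged, 1, 3, p, q))
```

With a single lost data block, XORing P with the surviving blocks is enough. Q is only needed when two blocks are missing, or when P itself is among the lost blocks.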

In summary, RAID 6 provides fault tolerance through striping with double distributed parity, allowing it to withstand the failure of up to two disks.

[1] https://www.techtarget.com/searchstorage/definition/RAID-6-redundant-array-of-independent-disks

Number of Disk Failures Tolerated

RAID 6 is specifically designed to tolerate up to 2 disk failures by storing two independent parity blocks in every stripe [1]. The parity is not held on dedicated disks. It is rotated across all drives in the array and consumes the capacity equivalent of 2 disks, allowing the array to reconstruct data even if any 2 drives fail [2]. This fault tolerance provides an extra layer of protection compared to RAID 5, which can only handle 1 disk failure before data loss occurs.

The 2 disk fault tolerance makes RAID 6 well-suited for larger arrays where the probability of multiple disk failures is higher over time. By giving up two disks' worth of capacity to parity, the array can remain operational even if 2 disks completely fail, whether at the same time or separately [3]. However, if a 3rd drive fails before the failed drives are rebuilt, data loss is unavoidable.

Likelihood of Disk Failure

Hard disk drives (HDDs) have an annualized failure rate (AFR) representing the percentage of drives expected to fail in a year. According to a study by Backblaze analyzing over 100,000 HDDs, the AFR can range from below 0.5% for top enterprise drives to over 2% for budget consumer models [1]. For example, the Seagate Barracuda 4TB consumer HDD had a 1.42% AFR while the HGST Ultrastar He10 10TB enterprise drive had just a 0.26% AFR.

In general, enterprise-class HDDs designed for 24/7 operation in servers and data centers have much lower failure rates than consumer-grade drives. This is due to higher quality components, firmware optimizations, and stringent design margins. Enterprise drives utilize technologies like rotational vibration sensors, ramp load technology, and humidity sensors to improve reliability.

Manufacturing techniques like helium-sealed enclosures on high-capacity drives also reduce component failures. Overall, enterprise HDDs are engineered to operate reliably under heavy workloads and last for years while consumer drives are designed simply to be inexpensive.

[1] https://www.backblaze.com/blog/hard-drive-stats-for-2019/

Calculating Chance of Dual Disk Failure

In RAID 6, data is striped across multiple drives with dual distributed parity, allowing for continued operation with up to two concurrent drive failures. To calculate the mathematical probability of dual drive failure, we need to know the individual drive failure rates.

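According to IBM, the current annualized failure rate (AFR) for enterprise HDDs is around 0.7-0.8% (source). This means that in a RAID 6 array of 10 drives, the chance of at least one drive failing in a year is roughly 7-8%. Assuming drives fail independently, the binomial distribution puts the chance of exactly two drives failing in the same year at about 0.21-0.27%. RAID 6 tolerates both of those failures even if they overlap. Data is lost only if a third drive fails before the rebuilds complete.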
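The binomial calculation can be reproduced with a short script. This is a sketch under the simplifying assumption that drives fail independently and at a constant rate, which real arrays only approximate. The function names are illustrative.

```python
from math import comb

def prob_exactly_k_failures(n: int, afr: float, k: int) -> float:
    """Binomial probability that exactly k of n drives fail within one year."""
    return comb(n, k) * afr**k * (1 - afr) ** (n - k)

def prob_at_least_one_failure(n: int, afr: float) -> float:
    """Probability that one or more of n drives fail within one year."""
    return 1 - (1 - afr) ** n

if __name__ == "__main__":
    drives = 10
    for afr in (0.007, 0.008):
        one_or_more = prob_at_least_one_failure(drives, afr)
        exactly_two = prob_exactly_k_failures(drives, afr, 2)
        print(f"AFR {afr:.1%}: at least one failure {one_or_more:.2%}, "
              f"exactly two failures {exactly_two:.3%}")
```

Changing `drives` shows how quickly both probabilities grow with array size.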

This probability increases for larger drive counts and depends heavily on factors like drive age, usage patterns, vibration, temperature, and manufacturing defects. Regular monitoring and preemptive drive replacements can help minimize the likelihood of dual failures occurring before rebuilds complete.

Rebuilding After Disk Failure

When a drive fails in a RAID 6 array, the array keeps running in a degraded state, and a rebuild restores full redundancy once a replacement drive is available. The rebuild starts automatically if a hot spare is configured. Otherwise it begins after the failed drive is swapped. It uses the data and parity spread across the remaining drives to recalculate the contents of the failed drive and write them to the replacement.

The rebuild process for RAID 6 can be quite lengthy depending on the size of the drives and the amount of data stored. With large capacity drives, rebuilds can take many hours or even days to complete. During this time, performance of the array will be degraded as the system devotes resources to the rebuild. The extra load also raises the risk of further drive failures. RAID 6 survives a second failure during the rebuild, but the array then has no redundancy left, and a third failure before the rebuilds finish would cause permanent data loss (Source).
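A rough estimate makes the "hours or even days" figure concrete. The sketch below assumes the whole drive is rewritten at a constant sustained rate, that drives fail independently at a constant rate, and that the example capacity, rate, and AFR are purely illustrative. Real rebuild times depend on the controller and on how busy the array is.

```python
HOURS_PER_YEAR = 8760

def rebuild_hours(capacity_tb: float, rate_mb_s: float) -> float:
    """Hours needed to rewrite one drive at a constant sustained rate."""
    capacity_mb = capacity_tb * 1_000_000  # decimal terabytes to megabytes
    return capacity_mb / rate_mb_s / 3600

def prob_failure_during(hours: float, afr: float, surviving_drives: int) -> float:
    """Chance that at least one surviving drive fails within the window."""
    per_drive = 1 - (1 - afr) ** (hours / HOURS_PER_YEAR)
    return 1 - (1 - per_drive) ** surviving_drives

if __name__ == "__main__":
    hours = rebuild_hours(capacity_tb=16, rate_mb_s=100)  # assumed figures
    risk = prob_failure_during(hours, afr=0.008, surviving_drives=9)
    print(f"rebuild takes about {hours:.1f} hours")
    print(f"chance of another failure in that window: {risk:.4%}")
```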

To minimize downtime, larger arrays may rebuild on a priority basis, restoring critical data first. Some RAID controllers also support hot spare drives, which allows a rebuild to start immediately upon failure. Overall, the redundancy of RAID 6 provides protection against dual drive failures, but the rebuild process can be disruptive.

Best Practices to Minimize Disk Failures

There are several best practices that can help minimize the likelihood of disk failures in a RAID 6 array:

First, closely monitor the health of disks using SMART (Self-Monitoring, Analysis and Reporting Technology) or other disk health monitoring utilities. Watch for signs of impending failure like increases in reallocated sectors or read errors. Replace drives proactively before failure occurs.

Follow disk manufacturer recommendations for usage and environmental conditions. Avoid exceeding temperature, vibration, or workload limits which can shorten disk lifespan. Keep firmware and drivers up to date as well.

Lastly, proactively replace disks after 3-5 years, before the end of their expected lifespan. Disk failure rates tend to increase after 3 years. Replacing disks ahead of failure will reduce the chance of dual disk failures occurring in the RAID 6 array.
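The monitoring and age-based replacement advice above can be turned into a simple check. This sketch assumes the SMART raw values have already been collected into a dictionary by some monitoring tool. It does not query drives itself. The attribute names follow the labels commonly reported for ATA drives, and the thresholds (any bad sector, five years of power-on time) are illustrative assumptions, not manufacturer guidance.

```python
HOURS_PER_YEAR = 8760

# Attributes whose raw values indicate sectors the drive could not read or had to remap.
WATCHED = ("Reallocated_Sector_Ct", "Current_Pending_Sector", "Offline_Uncorrectable")

def replacement_reasons(raw, max_bad_sectors=0, max_age_years=5.0):
    """Return a list of reasons to replace a drive; an empty list means none found."""
    reasons = []
    for name in WATCHED:
        value = raw.get(name, 0)
        if value > max_bad_sectors:
            reasons.append(f"{name}={value}")
    age_years = raw.get("Power_On_Hours", 0) / HOURS_PER_YEAR
    if age_years > max_age_years:
        reasons.append(f"age={age_years:.1f}y")
    return reasons

if __name__ == "__main__":
    # Hypothetical readings for one drive.
    drive = {"Reallocated_Sector_Ct": 8, "Power_On_Hours": 50000}
    print(replacement_reasons(drive))
```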

Sources:

https://www.techtarget.com/searchstorage/tip/Three-key-strategies-to-prevent-RAID-failure

https://www.stellarinfo.com/article/raid-failure-dos-and-donts.php

Alternative RAID Options

RAID 6 provides redundancy through double distributed parity, allowing for two disk failures. This makes RAID 6 preferable for setups with a large number of disks where the likelihood of multiple disk failures is higher. However, there are some alternatives to consider:

RAID 5 uses single distributed parity, so it can only handle one disk failure. The tradeoff is you gain more usable storage capacity compared to RAID 6. RAID 5 requires a minimum of 3 disks.

RAID 10 combines mirroring and striping for performance and redundancy. It can tolerate multiple disk failures as long as no more than one disk in each mirrored pair fails. The downside is it requires at least 4 disks and has a 50% storage capacity overhead.

RAID 0 provides striping but no redundancy, maximizing storage capacity and performance at the cost of fault tolerance. Data will be lost if any disk fails.

In general, RAID 6 is preferable for setups with 6 or more disks where fault tolerance is critical. RAID 10 can be an alternative if performance is the priority over storage capacity. RAID 5 provides good redundancy for smaller arrays. And RAID 0 is suitable for maximizing capacity and performance when redundancy is not needed.
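The capacity tradeoffs between these levels are easy to compare numerically. The sketch below assumes equal-sized disks and the usual minimum disk counts (2 for RAID 0, 3 for RAID 5, 4 for RAID 6 and RAID 10). The function name is illustrative.

```python
def usable_disks(level: str, n: int) -> int:
    """Number of disks' worth of usable capacity for n equal-sized disks."""
    if level == "RAID 0":
        minimum, usable = 2, n        # striping only, no redundancy
    elif level == "RAID 5":
        minimum, usable = 3, n - 1    # one disk's worth of parity
    elif level == "RAID 6":
        minimum, usable = 4, n - 2    # two disks' worth of parity
    elif level == "RAID 10":
        if n % 2:
            raise ValueError("RAID 10 needs an even number of disks")
        minimum, usable = 4, n // 2   # every disk is mirrored
    else:
        raise ValueError(f"unsupported level: {level}")
    if n < minimum:
        raise ValueError(f"{level} needs at least {minimum} disks")
    return usable

if __name__ == "__main__":
    disks = 8
    for level in ("RAID 0", "RAID 5", "RAID 6", "RAID 10"):
        usable = usable_disks(level, disks)
        print(f"{level}: {usable} of {disks} disks usable ({usable / disks:.0%})")
```

The relative cost of RAID 6 parity shrinks as the array grows, which is one reason it is favored for larger disk counts.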

Sources:

https://lemp.io/raid-configuration-servers-advantages-disadvantages-and-costs/

New Developments

There are some emerging solutions beyond traditional RAID 6 that aim to provide greater fault tolerance. Triple parity RAID is one method that adds a third parity block to each stripe to protect against up to 3 disk failures. Some enterprise storage vendors like Nimble Storage offer proprietary triple parity RAID implementations that can tolerate 3 disk failures with no data loss. [1]

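In the future, more general erasure coding schemes are expected to replace RAID 6 in many scenarios. RAID 6 parity is itself a simple erasure code with two redundant blocks per stripe. General erasure coding breaks data into a configurable number of fragments and adds a configurable number of redundant pieces, so it can tolerate more failures and spread data across many nodes. At large scale this can provide more efficient storage and better scalability than RAID 6. Major cloud storage providers like AWS and Azure have adopted erasure coding for large-scale storage. As new methods emerge, RAID 6 may become less prevalent over time.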

There are also new software-defined and hypervisor-based solutions that move beyond traditional hardware RAID to provide distributed, virtualized redundancy and fault tolerance. Overall, the future seems to be trending away from RAID 6 specifically and more towards flexible, software-based options with parity and erasure coding.

Conclusion

In summary, RAID 6 can tolerate up to two concurrent disk failures without data loss by using dual distributed parity. This provides excellent fault tolerance for disk-based storage systems. While the likelihood of dual disk failures may seem low, it increases substantially for large disk arrays. Careful RAID management and disk health monitoring are essential to minimize the chances of multiple simultaneous failures.

Overall, RAID 6 offers a robust level of protection by allowing continuous operations even after severe disk failures. The redundant parity mechanism is an elegant solution to prevent data loss, with an acceptable tradeoff of additional parity overhead. For mission critical storage systems, RAID 6 offers peace of mind by significantly enhancing fault tolerance and reliability.