RAID (Redundant Array of Independent Disks) is a data storage technology that combines multiple disk drives into a logical unit. There are several RAID levels that provide different balances of speed, capacity and redundancy for data protection. Two common RAID levels are RAID 5 and RAID 6.

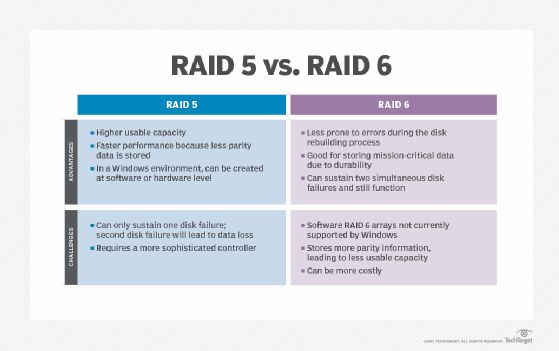

RAID 5 stripes data across all the drives in the array and uses parity to reconstruct data if a drive fails. RAID 6 also stripes data but uses double distributed parity, providing redundancy against up to two drive failures. However, the extra parity calculations in RAID 6 can impact performance compared to RAID 5. This article examines the differences in performance between RAID 5 and RAID 6.

What is RAID?

RAID (Redundant Array of Independent Disks) is a technology that allows users to combine multiple hard drives into logical units called arrays. RAID improves performance and/or reliability compared to a single hard drive.

There are several standard RAID levels that provide different benefits:

- RAID 0 stripes data across multiple drives for increased performance but offers no redundancy.

- RAID 1 mirrors data across drives for fault tolerance.

- RAID 5 stripes data and parity information across three or more drives for both faster access and redundancy.

- RAID 6 works like RAID 5 but with dual parity for additional fault tolerance.

- RAID 10 combines mirroring and striping for both performance and redundancy.

The key distinction between RAID levels is the tradeoff between improving performance, redundancy, or both. Levels that add parity, such as RAID 5 and RAID 6, gain redundancy but may have slower write speeds.

How RAID 5 Works

RAID 5 works by distributing data and parity information across a set of disks (at least 3). The data is divided into stripes that get written across the disks in the array. With each stripe, a parity block is calculated using XOR and also written to disk. The parity block allows for data recovery in case one of the drives fails.

For example, with 3 disks you might have stripe 1 written across disks 1, 2, and 3. Disk 1 contains data block A1, disk 2 contains data block B1, and disk 3 contains the parity block P1. The parity block P1 is calculated as A1 XOR B1. If any one of the disks fails, the missing data or parity block can be calculated by XORing the remaining blocks. This allows RAID 5 to tolerate the loss of any single disk without data loss.
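The three-disk example above can be sketched in a few lines of Python. This is only an illustration: the block contents and the `xor_blocks` helper are made up for the example, and a real array works on much larger blocks in the controller or driver.

```python
from functools import reduce


def xor_blocks(*blocks: bytes) -> bytes:
    """Byte-wise XOR of equally sized blocks."""
    return bytes(reduce(lambda x, y: x ^ y, column) for column in zip(*blocks))


# Hypothetical contents of one stripe on a 3-disk RAID 5 array.
a1 = b"RAID"              # data block on disk 1
b1 = b"five"              # data block on disk 2
p1 = xor_blocks(a1, b1)   # parity block on disk 3: P1 = A1 XOR B1

# Disk 1 fails: rebuild A1 from the two surviving blocks.
recovered_a1 = xor_blocks(b1, p1)

# Disk 2 fails instead: rebuild B1 the same way.
recovered_b1 = xor_blocks(a1, p1)

print(recovered_a1 == a1, recovered_b1 == b1)
```

The same XOR works no matter which single block is missing, which is why one parity block per stripe is enough to survive one failed disk.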

By distributing data across multiple disks, RAID 5 provides improved read performance compared to a single disk, since reads can be done in parallel. However, small writes are slower because the parity block must be updated on every write: the controller reads the old data and the old parity, then writes the new data and the new parity.

Sources:

https://www.tek-tips.com/viewthread.cfm?qid=254741

How RAID 6 Works

Like RAID 5, RAID 6 uses block-level striping with distributed parity, so data is broken up and distributed across all the drives in the array. RAID 6 differs in that it stores two parity blocks per stripe instead of the one used by RAID 5. This allows RAID 6 arrays to continue operating even if two drives in the array fail.

The dual parity provides fault tolerance for up to two failed drives. If two drives fail simultaneously, the array can still reconstruct all data, because each stripe carries two independently computed parity blocks, giving two equations from which two missing blocks can be solved. Like the data, the parity blocks are spread across different drives. The dual parity comes at the cost of usable storage capacity, since two drives' worth of space is consumed by parity rather than just one.
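The article does not say how the second parity block is computed. One common construction, assumed here purely for illustration, keeps the XOR parity (P) and adds a Reed-Solomon style parity (Q) over the finite field GF(2^8) with polynomial 0x11d and generator 2. The helper names below are hypothetical, and the sketch only covers the case where two data blocks are lost.

```python
def gf_mul(a: int, b: int) -> int:
    """Multiply two bytes in GF(2^8), reducing by the polynomial 0x11d."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= 0x11D
        b >>= 1
    return result


def gf_pow(a: int, n: int) -> int:
    result = 1
    for _ in range(n):
        result = gf_mul(result, a)
    return result


def gf_inv(a: int) -> int:
    """Inverse of a nonzero byte: a^254, because a^255 == 1 in GF(2^8)."""
    return gf_pow(a, 254)


def compute_pq(data):
    """Return (P, Q). P is plain XOR; Q weights data block i by 2^i."""
    size = len(data[0])
    p, q = bytearray(size), bytearray(size)
    for i, block in enumerate(data):
        coeff = gf_pow(2, i)
        for j, byte in enumerate(block):
            p[j] ^= byte
            q[j] ^= gf_mul(coeff, byte)
    return bytes(p), bytes(q)


def recover_two(data, x, y, p, q):
    """Rebuild lost data blocks x and y from the survivors plus P and Q."""
    size = len(p)
    # Treat the lost blocks as zeros so they drop out of the partial parities.
    survivors = [bytes(size) if i in (x, y) else blk for i, blk in enumerate(data)]
    p_surv, q_surv = compute_pq(survivors)
    gx, gy = gf_pow(2, x), gf_pow(2, y)
    denom_inv = gf_inv(gx ^ gy)
    dx, dy = bytearray(size), bytearray(size)
    for j in range(size):
        p_delta = p[j] ^ p_surv[j]   # equals Dx ^ Dy
        q_delta = q[j] ^ q_surv[j]   # equals gx*Dx ^ gy*Dy
        dx[j] = gf_mul(q_delta ^ gf_mul(gy, p_delta), denom_inv)
        dy[j] = p_delta ^ dx[j]
    return bytes(dx), bytes(dy)


# Hypothetical stripe with four data blocks; the blocks at index 1 and 3 are lost.
blocks = [b"AAAA", b"BBBB", b"CCCC", b"DDDD"]
p, q = compute_pq(blocks)
damaged = [blocks[0], None, blocks[2], None]
d1, d3 = recover_two(damaged, 1, 3, p, q)
print(d1 == blocks[1], d3 == blocks[3])
```

The second parity needs finite-field multiplications where the first needs only XOR, which is one reason RAID 6 parity is more expensive to compute.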

The key advantage of RAID 6 is that it can withstand up to two concurrent disk failures without experiencing data loss. This makes RAID 6 among the most fault-tolerant standard options outside of mirroring (RAID 1). The tradeoff is that you sacrifice more capacity for parity than you would with RAID 5.
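The capacity tradeoff is easy to put in numbers. The sketch below assumes equally sized drives and ignores filesystem and metadata overhead. The function name and the 8 x 4 TB example are illustrative only.

```python
# Drives' worth of capacity consumed by parity at each level.
PARITY_DRIVES = {"RAID 5": 1, "RAID 6": 2}


def usable_capacity_tb(level: str, disks: int, disk_tb: float) -> float:
    """Usable capacity of an array of identical disks, in TB."""
    parity = PARITY_DRIVES[level]
    if disks <= parity + 1:
        raise ValueError(f"{level} needs at least {parity + 2} disks")
    return (disks - parity) * disk_tb


# Hypothetical array: eight 4 TB drives.
raid5_tb = usable_capacity_tb("RAID 5", 8, 4.0)   # 28.0
raid6_tb = usable_capacity_tb("RAID 6", 8, 4.0)   # 24.0
print(raid5_tb, raid6_tb)
```

The cost of the second parity block is always one drive's worth of capacity, so its relative impact shrinks as the array grows.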

Sources:

https://www.data-medics.com/forum/threads/best-raid-6-explanation-by-emc.1258/

Write Performance

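For RAID 6, a small (partial-stripe) write typically requires six I/O operations: reading the old data block and both old parity blocks, then writing the new data block and both new parity blocks. The equivalent RAID 5 write needs four.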

This is because RAID 6 uses double distributed parity, requiring two sets of parity calculations. In comparison, RAID 5 only requires one set of parity calculations per write. Therefore, RAID 6 write operations take longer than RAID 5 due to the extra parity computation.
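A rough way to see the effect is the commonly cited small-write penalty: four back-end I/Os per write for RAID 5 (read data, read parity, write data, write parity) and six for RAID 6 (the same, plus a read and a write for the second parity block). The estimate below is a sketch under those assumptions. It ignores controller caches and full-stripe writes, and the per-disk IOPS figure is hypothetical.

```python
# Back-end I/Os generated by one small random write (assumed penalties).
WRITE_PENALTY = {"RAID 5": 4, "RAID 6": 6}


def effective_write_iops(level: str, disks: int, iops_per_disk: float) -> float:
    """Estimate random-write IOPS the array can sustain for the host."""
    return disks * iops_per_disk / WRITE_PENALTY[level]


# Hypothetical array: 8 disks, each able to do 150 random IOPS.
raid5_iops = effective_write_iops("RAID 5", 8, 150)   # 300.0
raid6_iops = effective_write_iops("RAID 6", 8, 150)   # 200.0
print(raid5_iops, raid6_iops)
```

Under this simple model RAID 6 delivers two thirds of RAID 5's random-write throughput. Measured gaps vary with workload and hardware.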

One benchmark showed RAID 6 write performance was approximately 20% slower than RAID 5 using 750GB drives. The extra parity calculations required for the second distributed parity in RAID 6 add overhead that negatively impacts write speeds.

Read Performance

Read performance on RAID 5 and RAID 6 is very similar with only minimal differences. According to benchmarks from UltamustM RAID, using 750 GB disk drives, RAID 6 had slightly lower read access speeds. RAID 6 achieved sustained reads of 500-525 MB/s compared to 525-550 MB/s for RAID 5. However, the difference of 25-50 MB/s is quite small in absolute terms. For most practical purposes, RAID 5 and RAID 6 can be considered to have nearly identical read performance [1].

The read performance difference between RAID 5 and 6 is minimal because parity information is not needed for reads from a healthy array. Both RAID levels spread data evenly across disks, maximizing parallelism. The only difference is that RAID 6 stores a second parity block in each stripe, distributed across the drives like the first. This does not significantly impact reads. Overall, read speed is excellent on both RAID 5 and 6.

Rebuild Times

One key difference between RAID 5 and RAID 6 is the amount of time it takes to rebuild the array if a disk fails. With RAID 5, each stripe holds a single parity block, so rebuilding a failed disk means reading the surviving disks and recomputing each missing block with XOR. With RAID 6, each stripe holds two parity blocks, and the second is more expensive to compute, so rebuilding can take longer.

Specifically, RAID 6 rebuild times scale with the number of disks in the array. As the array size grows, rebuilds take progressively longer. In the worst case, after a double failure, the rebuild process has to reconstruct not one but two disks' worth of data. Various benchmarks show RAID 6 rebuild times can be 2-3x longer than RAID 5, depending on the array size.

For example, one test with a 24 drive array showed RAID 6 rebuild times around 140 hours, compared to 50 hours for RAID 5. The higher disk count leads to a nearly 3x difference.

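Longer rebuild times widen the window in which another disk can fail during reconstruction. RAID 5 cannot survive such a failure, whereas RAID 6 can tolerate one additional failure while it rebuilds. Therefore, RAID 6 is better suited to large arrays where rebuild time and risk are a concern.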

Use Cases

The key consideration when choosing between RAID 5 and RAID 6 is the size of the array. As the number of disks in an array increases, the chance of multiple disk failures rises quickly. For smaller arrays (fewer than 10 disks), RAID 5 is generally sufficient, as the chance of two disks failing simultaneously is relatively low. However, for larger arrays (10+ disks), RAID 6 provides substantially more protection against data loss.
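To get a feel for how array size affects this risk, the sketch below uses a deliberately simple model that is not taken from any of the cited sources. One disk has already failed, and each surviving disk independently fails during the rebuild window with probability `p`. RAID 5 then loses data on one further failure, RAID 6 on two. The value of `p` is hypothetical, and real failures are often correlated, so treat the numbers as illustrative only.

```python
from math import comb


def p_at_least(k: int, n: int, p: float) -> float:
    """Probability that at least k of n independent disks fail."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def loss_probability_during_rebuild(level: str, disks: int, p: float) -> float:
    """Chance of data loss while rebuilding after a first disk failure."""
    survivors = disks - 1
    further_failures_needed = {"RAID 5": 1, "RAID 6": 2}[level]
    return p_at_least(further_failures_needed, survivors, p)


# Hypothetical 1% chance that any given disk fails during the rebuild window.
for disks in (4, 8, 12, 24):
    r5 = loss_probability_during_rebuild("RAID 5", disks, 0.01)
    r6 = loss_probability_during_rebuild("RAID 6", disks, 0.01)
    print(f"{disks:2d} disks  RAID 5: {r5:.4f}  RAID 6: {r6:.4f}")
```

In this model the risk grows with disk count for both levels, but the RAID 6 figure stays far below the RAID 5 figure at every size, which fits the advice to move to RAID 6 as arrays get larger.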

According to the Storage Performance Council’s “How to Select the Proper RAID Level” whitepaper, RAID 6 arrays should be strongly considered for any array with 10 or more disks. The paper states “As the system scales up in disk drive count, the chance for failure increases at an exponential rate…At around 10 drives, RAID 6 becomes a requirement for Enterprises that value their data” (Storage Performance Council, 2009).

Reddit users on r/DataHoarder generally recommend switching to RAID 6 once you reach 8-12 disk arrays. As user u/dr100 explains “I think 8 would be the normal threshold for going with raid6 instead of raid5 already, anything above 10-12 is certainly raid6 territory” (Reddit, 2021).

So in summary, RAID 5 can safely be used for arrays up to around 8 disks, while RAID 6 is recommended for 10+ disk arrays where uptime and data protection are critical.

Benchmarks

When looking at the performance differences between RAID 5 and RAID 6, it’s important to examine specific benchmarks and metrics. One detailed analysis from Dell compared RAID 5 and RAID 6 performance using an MD3000 storage array [1]. The tests showed that for sequential read operations, RAID 5 was approximately 5-15% faster than RAID 6. However, for random read operations, RAID 6 was actually slightly faster in some cases.

In terms of write performance, RAID 6 lagged behind RAID 5 more significantly. Sequential write speeds were 20-30% slower with RAID 6 compared to RAID 5. Random write operations saw an even wider gap, with RAID 6 showing 50-60% lower performance. The additional parity calculations required by RAID 6 result in this write performance penalty.

Rebuild times after a disk failure are also substantially impacted. With RAID 5, rebuilds took around 6-7 hours in these tests. For RAID 6, rebuilds took nearly twice as long at 11-12 hours. The second parity block that RAID 6 maintains in every stripe leads to increased rebuild times.

Overall, the benchmarks show RAID 6 does have slower write speeds than RAID 5, with the tradeoff of gaining an additional disk failure tolerance. However, read speeds are similar or only slightly slower with RAID 6. So for read-heavy workloads, RAID 6 can still offer strong performance.

Conclusions

In summary, the key differences between RAID 5 and RAID 6 in terms of performance are:

- Write performance: RAID 6 is slower than RAID 5 as it requires more parity calculations

- Read performance: Both RAID 5 and 6 have similar read speeds

- Rebuild times: RAID 6 rebuilds take about twice as long as RAID 5 due to the extra parity

So in most cases, RAID 6 will have slower write speeds but similar read speeds compared to RAID 5. The tradeoff is that RAID 6 offers better fault tolerance and can withstand the loss of 2 drives instead of just 1.

In terms of recommendations:

- For performance-critical applications where write speed is a priority, RAID 5 may be preferable

- For mission-critical data that needs maximum protection, RAID 6 is likely the better option despite the performance tradeoffs

- RAID 6 is recommended for large arrays (e.g. 8+ drives) where the risk of multiple drive failures is higher

Overall, RAID 6 provides significantly more fault tolerance at the cost of slower write speeds. The tradeoff may be worthwhile for highly important data where reliability is critical.